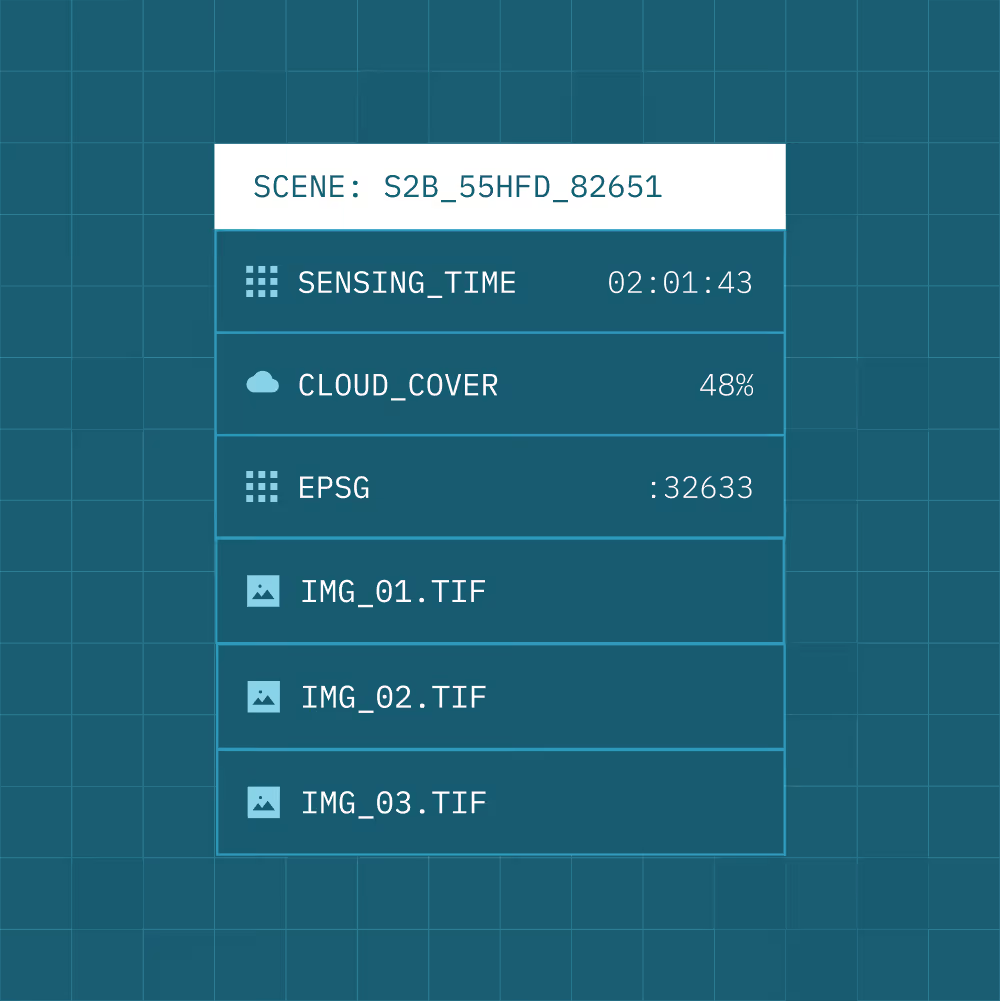

STAC-based structure

Normalise imagery into a consistent, standards-based catalogue.

Cloud Optimised GeoTIFF (COG)

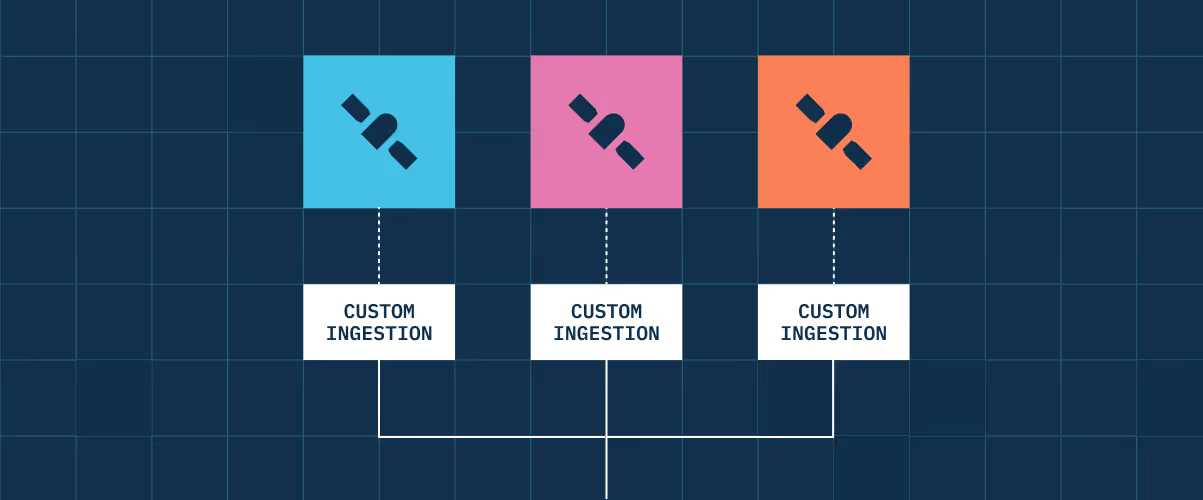

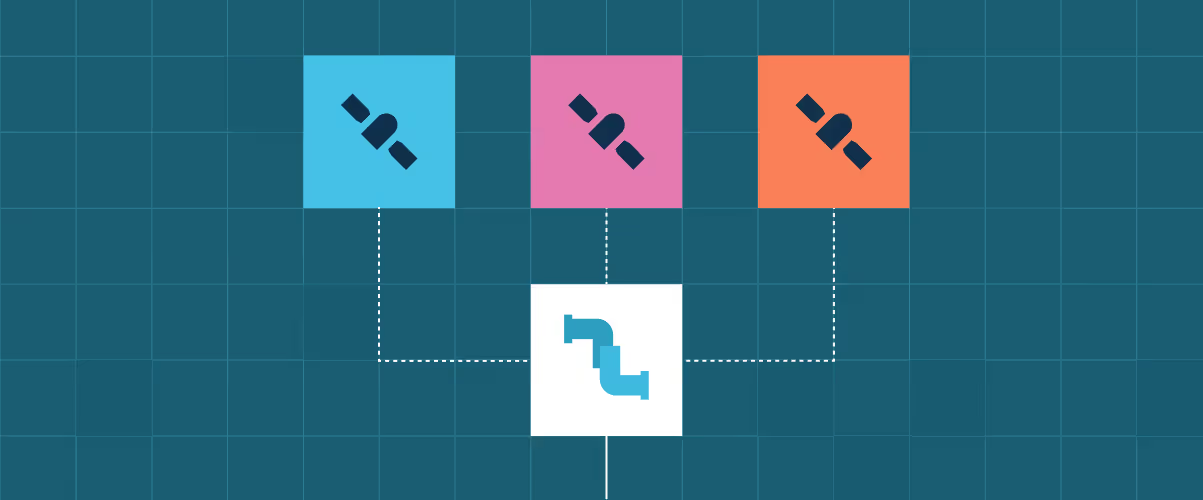

Handle execution order and interdependencies across processing steps.

Quality layers and annotations

Generate and standardise metadata as part of ingestion.

Consistent structure across providers

Eliminate format and schema inconsistencies between sensors and suppliers.
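
As a rough illustration of what removing schema inconsistencies can look like, the sketch below maps two differently shaped supplier records onto the same STAC-Item-style structure. The provider records, their field names, the `example-bucket` paths, and the helper functions are all hypothetical and are not taken from any real supplier or from this product. Only the top-level Item fields follow the STAC 1.0.0 Item layout.

```python
from datetime import datetime, timezone

# Hypothetical raw metadata from two suppliers (field names invented for illustration).
provider_a = {"scene_id": "A-001", "acquired": "2024-03-01T10:15:00Z",
              "bounds": [10.0, 45.0, 10.5, 45.5],
              "file": "s3://example-bucket/a-001.tif"}
provider_b = {"id": "B-42", "timestamp": 1709288100,
              "extent": {"west": 10.0, "south": 45.0, "east": 10.5, "north": 45.5},
              "url": "s3://example-bucket/b-42.tif"}


def bbox_to_polygon(bbox):
    """Turn [west, south, east, north] into a closed GeoJSON Polygon ring."""
    west, south, east, north = bbox
    ring = [[west, south], [east, south], [east, north], [west, north], [west, south]]
    return {"type": "Polygon", "coordinates": [ring]}


def to_stac_item(item_id, when, bbox, href):
    """Build a minimal STAC-Item-shaped dict with a single COG asset."""
    return {
        "type": "Feature",
        "stac_version": "1.0.0",
        "id": item_id,
        "bbox": bbox,
        "geometry": bbox_to_polygon(bbox),
        "properties": {"datetime": when.strftime("%Y-%m-%dT%H:%M:%SZ")},
        "links": [],
        "assets": {
            "data": {
                "href": href,
                "type": "image/tiff; application=geotiff; profile=cloud-optimized",
            }
        },
    }


def from_provider_a(rec):
    # Provider A (hypothetical) ships ISO 8601 strings and a flat bounds list.
    when = datetime.strptime(rec["acquired"], "%Y-%m-%dT%H:%M:%SZ").replace(tzinfo=timezone.utc)
    return to_stac_item(rec["scene_id"], when, rec["bounds"], rec["file"])


def from_provider_b(rec):
    # Provider B (hypothetical) ships Unix timestamps and a named extent.
    when = datetime.fromtimestamp(rec["timestamp"], tz=timezone.utc)
    e = rec["extent"]
    bbox = [e["west"], e["south"], e["east"], e["north"]]
    return to_stac_item(rec["id"], when, bbox, rec["url"])


items = [from_provider_a(provider_a), from_provider_b(provider_b)]
```

Once both records pass through their adapter, downstream code can treat `items` uniformly, regardless of which supplier the scene came from.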

Analysis-ready outputs

Ensure data is ready for analytics and automation pipelines on ingestion.

Accelerated onboarding

Integrate new sensors and missions without custom restructuring.